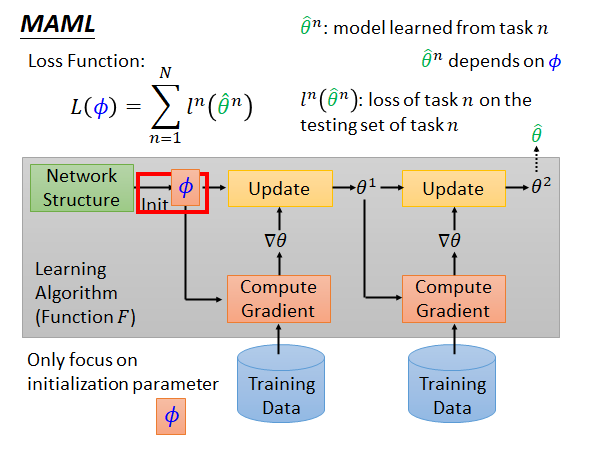

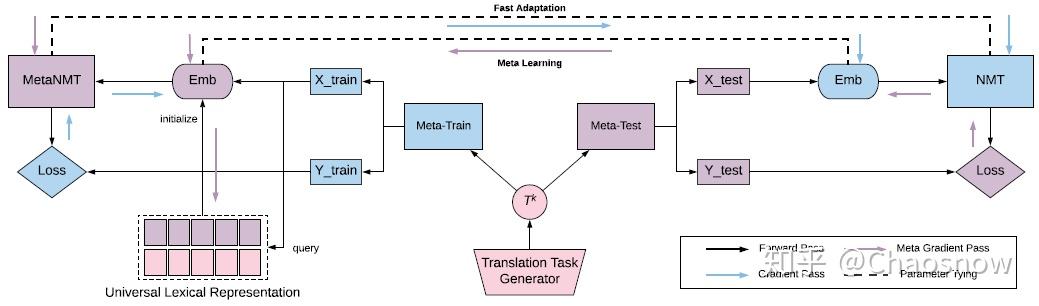

Humans can learn new concepts from limited examples, and this ability is also desirable for artificial agents. Meta-learning is a research field that attempts to equip conventional machine learning architectures with the power to gain meta-knowledge about a range of tasks, so that they can solve problems like the one above with human-level accuracy. It achieves this by learning useful inductive biases, so that a model can adapt quickly from a few examples.

Figure 24.1: Meta Learning principle 1.

The work [3] proposed a model-agnostic meta-learning (MAML) approach, an initial parameter-transfer methodology where the goal is to learn a good … MAML tries to do multi-task learning by fine-tuning: we want to optimize for a pre-trained model that can adapt to a variety of tasks in a few gradient steps. In recent years, MAML has become a popular research area and has emerged as one of the most successful meta-learning techniques in few-shot learning. Although MAML uses standard gradient descent for inner-loop optimization, recent works demonstrate that an improved inner-loop optimization can positively … Drawing upon implicit differentiation, the implicit MAML algorithm depends only on the solution to the inner-level optimization and not the …

From the abstract of "Multimodal Meta-Learning for Time Series Regression", by Sebastian Pineda Arango and 3 other authors: Recent work has shown that deep learning models such as Fully Convolutional Networks (FCN) or Recurrent Neural Networks (RNN) deal efficiently with time series regression (TSR) problems. These models sometimes need a lot of data to generalize, yet the time series are sometimes not long enough to learn patterns from. Therefore, it is important to make use of information across time series to improve learning. In this paper, we explore the idea of using meta-learning for quickly adapting model parameters to new short-history time series by modifying the original idea of Model-Agnostic Meta-Learning (MAML) \cite. We also propose a method for conditioning parameters of the model through an auxiliary network that encodes global information of the time series to extract meta-features. Finally, we apply the method to time series of different domains, such as pollution measurements, heart-rate sensors, and electrical battery data. We show empirically that our proposed meta-learning method learns TSR quickly from few data and outperforms the baselines in 9 of 12 experiments.

From the abstract of "Memory-Based Optimization Methods for Model-Agnostic Meta-Learning and Personalized Federated Learning", by Bokun Wang, Zhuoning Yuan, Yiming Ying, and Tianbao Yang, 24(145):1-46, 2023: The stochastic optimization of MAML is still underdeveloped. Existing MAML algorithms rely on the "episode" idea, sampling a few tasks and data points to update the meta-model at each iteration. Nonetheless, these algorithms either fail to guarantee convergence with a constant mini-batch size or require processing a large number of tasks at every iteration, which is unsuitable for continual learning or cross-device federated learning, where only a small number of tasks are available per iteration or per round. To address these issues, this paper proposes memory-based stochastic algorithms for MAML that converge with vanishing error. The proposed algorithms require sampling a constant number of tasks and data samples per iteration, making them suitable for the continual learning scenario. Moreover, we introduce a communication-efficient memory-based MAML algorithm for personalized federated learning in cross-device (with client sampling) and cross-silo (without client sampling) settings. Our theoretical analysis improves the optimization theory for MAML, and our empirical results corroborate our theoretical findings.

Libraries: Higher by Facebook Research, TorchMeta, Learn2learn. Blogs: Berkeley Artificial Intelligence Research blog. Books: Hands-On Meta Learning with Python: Meta learning using one-shot learning, MAML, Reptile, and Meta-SGD with TensorFlow (2019), Sudharsan Ravichandiran.
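To make MAML's inner/outer loop and its episodic task sampling concrete, here is a minimal Python sketch. It is a toy, not the method of any paper mentioned here: each task is assumed to be a short AR(1) series y_t = a * y_{t-1} + noise, the model is a single weight w predicting y_t = w * y_{t-1}, and all names (`make_series`, `windows`, `maml_meta_grad`, `meta_train`) are hypothetical. Because the loss is quadratic in w, the second-order MAML gradient can be written exactly with the chain rule through the inner step.

```python
import random

# Hypothetical toy setup: each task is a short AR(1) series
# y_t = a * y_{t-1} + noise, and the model predicts y_t = w * y_{t-1}.


def make_series(a, length, rng):
    """Generate a short AR(1) series with task-specific coefficient a."""
    ys = [rng.gauss(0.0, 1.0)]
    for _ in range(length - 1):
        ys.append(a * ys[-1] + rng.gauss(0.0, 0.1))
    return ys


def windows(series):
    """Turn a series into (previous value, next value) regression pairs."""
    return list(zip(series[:-1], series[1:]))


def mse_loss_grad_hess(w, pairs):
    """MSE of y = w * x and its first and second derivatives with respect to w."""
    n = len(pairs)
    loss = sum((w * x - y) ** 2 for x, y in pairs) / n
    grad = sum(2 * x * (w * x - y) for x, y in pairs) / n
    hess = sum(2 * x * x for x, _ in pairs) / n
    return loss, grad, hess


def maml_meta_grad(w, support, query, alpha):
    """Exact second-order MAML gradient for one task and one inner step."""
    _, g_s, h_s = mse_loss_grad_hess(w, support)
    phi = w - alpha * g_s  # inner loop: adapt on the support set
    loss_q, g_q, _ = mse_loss_grad_hess(phi, query)
    # Chain rule through the inner step: d(phi)/d(w) = 1 - alpha * h_s
    return loss_q, (1 - alpha * h_s) * g_q


def meta_train(steps=200, tasks_per_episode=4, alpha=0.1, meta_lr=0.05, seed=0):
    """Outer loop: each iteration samples an episode of a few tasks."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(steps):
        meta_grad = 0.0
        for _ in range(tasks_per_episode):
            a = rng.uniform(-0.9, 0.9)
            pairs = windows(make_series(a, 12, rng))
            support, query = pairs[:6], pairs[6:]
            _, g = maml_meta_grad(w, support, query, alpha)
            meta_grad += g / tasks_per_episode
        w -= meta_lr * meta_grad  # meta-update of the shared initialization
    return w
```

`meta_train()` returns the learned initialization; adapting it to a new short series is then a single step `w - alpha * grad` on that series' support pairs. In a real network the same chain rule is handled by automatic differentiation, and first-order variants simply drop the `(1 - alpha * h_s)` factor.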

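The implicit-differentiation idea behind implicit MAML can be sketched on a toy scalar model y = w * x. This is an illustration under stated assumptions, not the iMAML authors' code: the inner problem is assumed to be the proximal objective L_s(phi) + (lam / 2) * (phi - theta)^2, solved here by plain gradient descent in a hypothetical `inner_solve` helper. The meta-gradient then uses only the final solution phi* and the inner Hessian H there, through d(phi*)/d(theta) = (1 + H / lam)^(-1), and never differentiates through the optimizer's steps.

```python
def mse_loss_grad_hess(w, pairs):
    """MSE of y = w * x and its first and second derivatives with respect to w."""
    n = len(pairs)
    loss = sum((w * x - y) ** 2 for x, y in pairs) / n
    grad = sum(2 * x * (w * x - y) for x, y in pairs) / n
    hess = sum(2 * x * x for x, _ in pairs) / n
    return loss, grad, hess


def inner_solve(theta, support, lam, lr=0.05, steps=500):
    """Approximately minimize L_s(phi) + lam / 2 * (phi - theta)**2 by gradient descent."""
    phi = theta
    for _ in range(steps):
        _, g, _ = mse_loss_grad_hess(phi, support)
        phi -= lr * (g + lam * (phi - theta))
    return phi


def imaml_meta_grad(theta, support, query, lam):
    """Implicit meta-gradient: uses only the inner solution, not the optimization path."""
    phi = inner_solve(theta, support, lam)
    _, _, h_s = mse_loss_grad_hess(phi, support)  # inner Hessian at the solution
    _, g_q, _ = mse_loss_grad_hess(phi, query)
    # Implicit function theorem (scalar case): d(phi*)/d(theta) = 1 / (1 + h_s / lam)
    return g_q / (1 + h_s / lam)


support = [(1.0, 0.5), (0.5, 0.3), (-1.0, -0.4)]
query = [(0.8, 0.35), (-0.6, -0.3)]
print(imaml_meta_grad(0.2, support, query, lam=1.0))
```

Here H is a scalar, so the inverse is a division. For a real network, (1 + H / lam)^(-1) applied to the query gradient would typically be approximated with an iterative solver such as conjugate gradient rather than formed explicitly.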

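The conditioning idea from the time series abstract (an auxiliary network that encodes global information of a series into meta-features that adjust the model's parameters) can be sketched as follows. The specific design is an assumption, not the paper's architecture: the meta-features are taken to be the series mean and standard deviation, and a hypothetical linear `auxiliary_net` maps them to a FiLM-style scale for the base weights and a shift for the base bias. All names are illustrative.

```python
import statistics


def meta_features(series):
    """Global summary of the whole series (assumed choice: mean and std)."""
    return [statistics.fmean(series), statistics.pstdev(series)]


def auxiliary_net(features, aux):
    """Hypothetical linear encoder: meta-features -> (scale, shift)."""
    scale = aux["scale_b"] + sum(a * f for a, f in zip(aux["scale_w"], features))
    shift = aux["shift_b"] + sum(a * f for a, f in zip(aux["shift_w"], features))
    return scale, shift


def conditioned_predict(window, base_w, base_b, scale, shift):
    """Predict the next value with base parameters modulated by (scale, shift)."""
    weights = [scale * w for w in base_w]  # condition the weights
    bias = base_b + shift                  # condition the bias
    return sum(w * x for w, x in zip(weights, window)) + bias


series = [1.0, 2.0, 3.0, 4.0]
base_w, base_b = [0.5, 0.5], 0.0
identity = {"scale_w": [0.0, 0.0], "scale_b": 1.0, "shift_w": [0.0, 0.0], "shift_b": 0.0}
scale, shift = auxiliary_net(meta_features(series), identity)
# With the identity encoder the prediction equals the unconditioned base model.
print(conditioned_predict(series[-2:], base_w, base_b, scale, shift))  # 3.5
```

In practice the encoder's parameters would be learned jointly with the base model, so that series with different global statistics start adaptation from differently modulated parameters.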

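The memory idea can be illustrated with a deliberately simplified sketch. It is loosely inspired by the memory-based approach to stochastic MAML described in this post and is not that paper's algorithm: the assumed memory is a per-task moving average of the adapted parameter w - alpha * grad L_s(w), updated only for the constant number of tasks sampled in each iteration, and then used in place of the noisy one-batch estimate when forming the meta-gradient. `TaskMemory`, `memory_maml_step`, and `run` are hypothetical names, and the model is a toy scalar y = w * x.

```python
import random


def mse_loss_grad_hess(w, pairs):
    """MSE of y = w * x and its first and second derivatives with respect to w."""
    n = len(pairs)
    loss = sum((w * x - y) ** 2 for x, y in pairs) / n
    grad = sum(2 * x * (w * x - y) for x, y in pairs) / n
    hess = sum(2 * x * x for x, _ in pairs) / n
    return loss, grad, hess


class TaskMemory:
    """Per-task moving average of adapted parameters (hypothetical simplification)."""

    def __init__(self, beta):
        self.beta = beta
        self.u = {}

    def update(self, task_id, estimate):
        if task_id not in self.u:
            self.u[task_id] = estimate  # first visit: store the raw estimate
        else:
            self.u[task_id] = (1 - self.beta) * self.u[task_id] + self.beta * estimate
        return self.u[task_id]


def memory_maml_step(w, tasks, memory, rng, alpha=0.1, lr=0.05, tasks_per_iter=2, batch=4):
    """One meta-update that touches only a constant number of tasks and samples."""
    meta_grad = 0.0
    for task_id in rng.sample(sorted(tasks), tasks_per_iter):
        data = rng.sample(tasks[task_id], 2 * batch)
        support, query = data[:batch], data[batch:]
        _, g_s, h_s = mse_loss_grad_hess(w, support)
        u = memory.update(task_id, w - alpha * g_s)  # smoothed adapted parameter
        _, g_q, _ = mse_loss_grad_hess(u, query)
        meta_grad += (1 - alpha * h_s) * g_q / tasks_per_iter
    return w - lr * meta_grad


def run(steps=100, n_tasks=5, seed=0):
    """Meta-train on a few synthetic regression tasks with the memory enabled."""
    rng = random.Random(seed)
    tasks = {}
    for i in range(n_tasks):
        a = rng.uniform(-0.9, 0.9)
        xs = [rng.gauss(0.0, 1.0) for _ in range(20)]
        tasks[i] = [(x, a * x + rng.gauss(0.0, 0.05)) for x in xs]
    memory = TaskMemory(beta=0.3)
    w = 0.0
    for _ in range(steps):
        w = memory_maml_step(w, tasks, memory, rng)
    return w, memory
```

Setting `beta=1.0` makes the memory store only the latest one-batch estimate, which recovers an ordinary episodic update; smaller values average estimates across iterations, which is the kind of variance reduction that lets per-iteration task and sample counts stay constant.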
